CASE STUDY SNAPSHOT

Customer : A leading automotive OEM / Tier-1 instrument cluster manufacturer

Project vertical : Automotive

Challenge : Required high-precision, repeatable, and scalable validation of an automotive instrument cluster involving CAN signal simulation, physical button actuation, and accurate visual verification of display outputs.

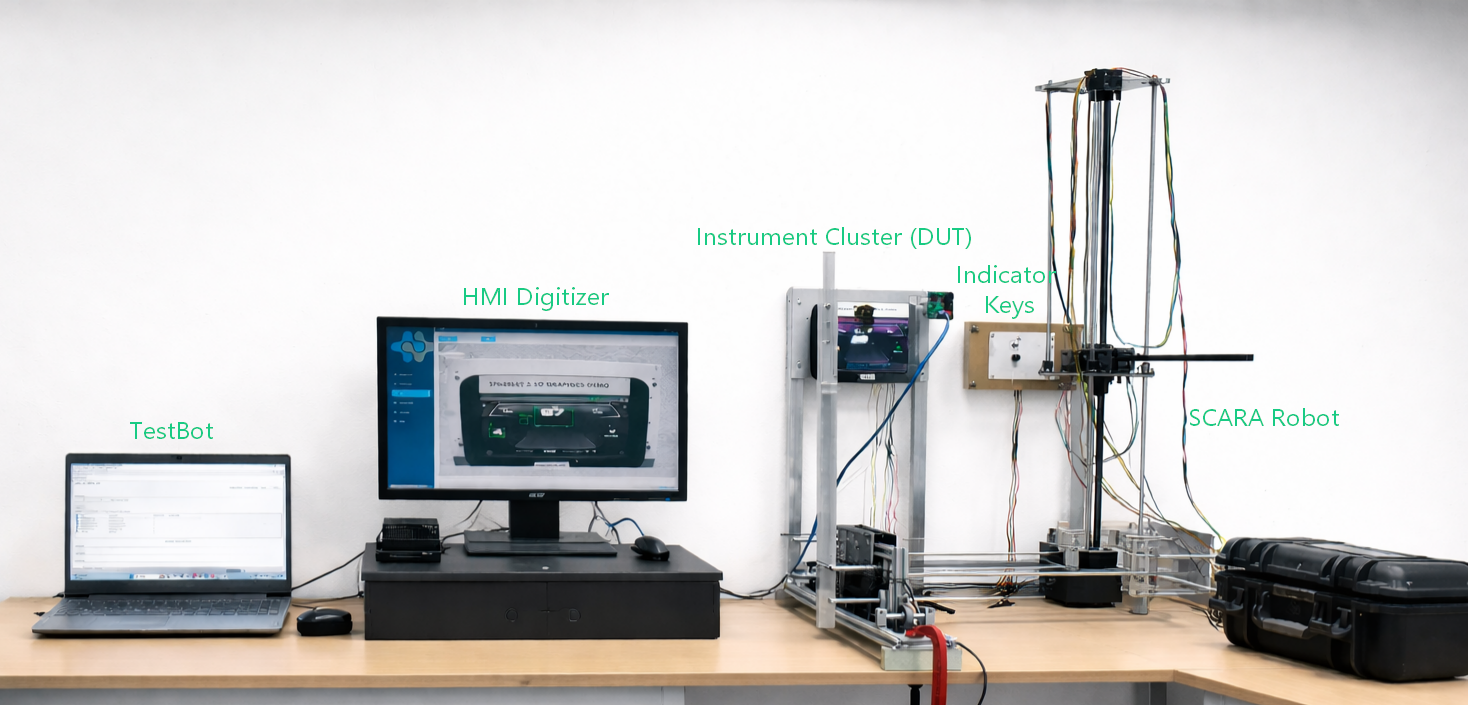

Solution : Leveraged TestBot’s multi-agent architecture combining CAN Agent, Panel Key Controller Agent (SCARA-based), and HMI Digitizer Agent (AI/ML vision on NVIDIA Jetson) to create a fully automated, synchronized validation pipeline.

Tools and Technologies :

- Framework: TestBot (Unified automated testing framework)

- Agents: CAN Agent, Panel Key Controller Agent, HMI Digitizer Agent

- Hardware Integration: SCARA robot with precision jig for panel key actuation

- AI/ML Platform: NVIDIA Jetson for real-time vision inference

- Protocol: CAN (DBC-driven), UDS Diagnostics

- Validation Model: AI-based widget classification with JSON output comparison

Customer Overview

A leading automotive manufacturer developed a next-generation instrument cluster responsible for displaying critical vehicle data such as speed, RPM, fuel level, warning indicators, ADAS notifications, and driver alerts.

As a safety-critical interface, the cluster must accurately reflect real-time vehicle states under all operating conditions. Even minor deviations in display behavior could lead to compliance failures or safety risks.

Challenge

The customer faced a complex validation problem spanning multiple domains:

- The instrument cluster depended on continuous CAN bus inputs from multiple ECUs, requiring precise and high-volume signal simulation.

- Display validation was manual, relying on human observation, leading to missed defects and inconsistent results.

- Physical panel key testing (trip reset, brightness, menu navigation) could not be automated using software alone.

- Regression cycles were time-intensive (2-3 days per build) and lacked repeatability.

- There was no structured audit trail: results were recorded manually, without supporting visual or data evidence.

In essence, validation required synchronization across network simulation, physical interaction, and visual verification, which manual processes could not reliably achieve.

TestBot Solution

The team used TestBot’s multi-agent architecture to orchestrate three specialized agents into a single, synchronized validation pipeline: two agents driving inputs and one performing deterministic output validation.

The CAN Agent acted as a software-defined ECU, injecting DBC-defined signals directly onto the cluster’s CAN bus. Each test case defined a complete signal state—for example, speed at 80 km/h, RPM at 3000, fuel at 10%, and turn signal active—which the agent encoded into valid CAN frames and transmitted with precise timing. It supported multi-signal injection, configurable transmission intervals, and UDS frames for diagnostic scenarios.
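
As a rough illustration of what "encoding a signal state into a CAN frame" involves, the sketch below packs physical values into an 8-byte payload using DBC-style scale and offset factors. It is not TestBot's implementation: the `Signal` class, the `SIGNALS` layout (bit positions, lengths, scale factors), and the function names are all hypothetical, and a real setup would read these definitions from the customer's DBC file.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Signal:
    """Hypothetical DBC-style signal: unsigned, little-endian bit layout."""
    start_bit: int
    length: int
    scale: float
    offset: float


# Illustrative layout only; real definitions come from the DBC file.
SIGNALS = {
    "speed_kmh": Signal(start_bit=0, length=16, scale=0.01, offset=0.0),
    "rpm": Signal(start_bit=16, length=16, scale=0.25, offset=0.0),
    "fuel_pct": Signal(start_bit=32, length=8, scale=0.5, offset=0.0),
    "turn_left": Signal(start_bit=40, length=1, scale=1.0, offset=0.0),
}


def encode_frame(values: dict) -> bytes:
    """Pack physical signal values into an 8-byte CAN payload."""
    payload = 0
    for name, physical in values.items():
        sig = SIGNALS[name]
        # Physical value -> raw integer, as in the usual DBC convention.
        raw = round((physical - sig.offset) / sig.scale)
        if not 0 <= raw < (1 << sig.length):
            raise ValueError(f"{name}={physical} does not fit in {sig.length} bits")
        payload |= raw << sig.start_bit
    return payload.to_bytes(8, "little")


def decode_signal(name: str, data: bytes) -> float:
    """Recover one physical value from a payload (useful for log checks)."""
    sig = SIGNALS[name]
    mask = (1 << sig.length) - 1
    raw = (int.from_bytes(data, "little") >> sig.start_bit) & mask
    return raw * sig.scale + sig.offset


# The signal state used as the example in the text above.
frame = encode_frame({"speed_kmh": 80, "rpm": 3000, "fuel_pct": 10, "turn_left": 1})
print(frame.hex())
```

Transmission timing, multi-frame scheduling, and UDS framing are deliberately left out of this sketch; it only covers the value-to-bytes step.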

In parallel, the Panel Key Controller Agent enabled physical interaction through a SCARA robot mounted on a custom mechanical jig. The jig securely held the cluster unit while damping external vibrations. The robot’s end effector, fitted with a compliant silicone tip, replicated human press behavior. TestBot commands specifying button, press type (single, long, double), and dwell time were translated into motion coordinates, achieving actuation with ±0.1 mm positional tolerance. This eliminated variability in press force and timing, making hardware interaction fully deterministic.
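
The translation from a button command to robot motion can be pictured as follows. This is a minimal sketch under stated assumptions: the button coordinates, the hover and press heights, the gap between double presses, and the names `Waypoint` and `plan_press` are invented for illustration and do not come from the deployed system.

```python
from dataclasses import dataclass

# Hypothetical button centers in the jig's coordinate frame (mm).
BUTTON_XY_MM = {
    "trip_reset": (42.0, 18.5),
    "brightness": (58.0, 18.5),
    "menu": (74.0, 18.5),
}

HOVER_Z_MM = 5.0           # assumed clearance above the button
PRESS_Z_MM = -1.0          # assumed press depth
DOUBLE_PRESS_GAP_S = 0.15  # assumed pause between the two presses

PRESS_REPEATS = {"single": 1, "long": 1, "double": 2}


@dataclass(frozen=True)
class Waypoint:
    x: float
    y: float
    z: float
    hold_s: float  # time to hold at this position before moving on


def plan_press(button: str, press_type: str, dwell_s: float) -> list:
    """Turn a (button, press type, dwell time) command into waypoints."""
    if press_type not in PRESS_REPEATS:
        raise ValueError(f"unknown press type: {press_type}")
    x, y = BUTTON_XY_MM[button]
    repeats = PRESS_REPEATS[press_type]
    plan = [Waypoint(x, y, HOVER_Z_MM, 0.0)]  # approach above the button
    for i in range(repeats):
        plan.append(Waypoint(x, y, PRESS_Z_MM, dwell_s))  # press and hold
        gap = DOUBLE_PRESS_GAP_S if i < repeats - 1 else 0.0
        plan.append(Waypoint(x, y, HOVER_Z_MM, gap))      # release
    return plan


for step in plan_press("trip_reset", "long", dwell_s=2.5):
    print(step)
```

In this sketch a long press differs from a single press only in its dwell time, which is one simple way to keep the press types deterministic and comparable.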

Once the cluster reached the intended state, the HMI Digitizer Agent performed output validation. A high-resolution camera, mounted in a fixed calibrated position, captured the display. The image was processed on an NVIDIA Jetson device running an AI/ML model trained on over 4,000 labeled cluster images.

The model analyzed each frame and returned a structured JSON output describing widget states, including:

- Speedometer and tachometer needle angles

- Fuel and temperature levels

- Odometer and trip values

- Gear position indicators

- Warning and fault icons (active/inactive)

- Turn signal and beam states

- ADAS notification widgets

The system achieved 98.7% classification accuracy at 720p under controlled lighting conditions, enabling consistent and objective validation.

Execution Flow

Each test cycle was orchestrated end-to-end by TestBot.

The execution engine loaded the Excel-based test dataset and initialized all agents. For each test case, the CAN Agent injected the required signal set while the Panel Key Controller Agent executed any defined physical actions. After a configurable settling time—typically 300 to 500 milliseconds—the HMI Digitizer Agent captured the display and triggered inference on the Jetson device.
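
The per-test-case sequence described above can be sketched as a simple loop. The code is illustrative only: `ClusterCase`, `run_cycle`, and the three callables standing in for the agents are hypothetical names, the steps run sequentially for simplicity, and loading the Excel dataset is omitted.

```python
import time
from dataclasses import dataclass, field


@dataclass
class ClusterCase:
    """One row of the test dataset (hypothetical shape)."""
    case_id: str
    signals: dict
    key_actions: list = field(default_factory=list)
    settle_s: float = 0.4  # within the 300 to 500 ms range mentioned above


def run_cycle(cases, inject, press, capture_and_infer, sleep=time.sleep):
    """Drive inputs, wait for the display to settle, then capture and infer.

    inject, press and capture_and_infer are stand-ins for the CAN Agent,
    Panel Key Controller Agent and HMI Digitizer Agent respectively.
    """
    results = {}
    for case in cases:
        inject(case.signals)              # CAN signal set for this case
        for action in case.key_actions:   # any physical key presses
            press(action)
        sleep(case.settle_s)              # configurable settling time
        results[case.case_id] = capture_and_infer()
    return results


if __name__ == "__main__":
    demo = [ClusterCase("TC-001", {"speed_kmh": 80}, ["trip_reset"])]
    out = run_cycle(
        demo,
        inject=lambda signals: print("inject", signals),
        press=lambda action: print("press", action),
        capture_and_infer=lambda: {"gear": "D"},
    )
    print(out)
```

Passing `sleep` as a parameter keeps the loop testable without real delays; a production orchestrator would also need error handling and agent initialization, which this sketch leaves out.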

The resulting JSON output was compared against expected values using configurable validation rules. Results were logged at a granular level, with pass/fail recorded per widget.
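
A per-widget comparison of this kind might look like the sketch below. The widget names, the JSON shape, and the tolerance values are assumptions made for illustration; the source does not specify the actual schema or rule format.

```python
import json

# Hypothetical rules: numeric widgets get an absolute tolerance,
# everything else must match exactly.
TOLERANCES = {"speed_needle_deg": 1.5, "fuel_level_pct": 2.0}


def compare_widgets(expected: dict, actual: dict, tolerances: dict) -> dict:
    """Return a pass/fail verdict for every expected widget."""
    verdicts = {}
    for widget, want in expected.items():
        got = actual.get(widget)
        tol = tolerances.get(widget)
        if got is None:
            ok = False                     # widget missing from the output
        elif tol is not None:
            ok = abs(got - want) <= tol    # numeric check within tolerance
        else:
            ok = got == want               # exact match for state widgets
        verdicts[widget] = "pass" if ok else "fail"
    return verdicts


expected = {
    "speed_needle_deg": 120.0,
    "fuel_level_pct": 10.0,
    "low_fuel_icon": "active",
    "gear": "D",
}
# Stand-in for the inference output returned by the vision model.
inference_json = (
    '{"speed_needle_deg": 120.8, "fuel_level_pct": 10.0, '
    '"low_fuel_icon": "inactive", "gear": "D"}'
)
verdicts = compare_widgets(expected, json.loads(inference_json), TOLERANCES)
print(json.dumps(verdicts, indent=2))
```

In this example the needle angle passes because it is within the assumed 1.5 degree tolerance, while the low-fuel icon fails on an exact-match rule, which is the kind of per-widget granularity the logging relies on.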

Each execution produced a complete evidence set, including captured images, CAN logs, and inference outputs, all of which were archived and included in the final report generated in PDF and Excel formats.

Key Testing Areas Covered

The automation framework validated the instrument cluster comprehensively across:

- CAN-driven widget behavior (speed, RPM, fuel, temperature)

- Warning and fault indicator logic

- ADAS notifications and display states

- Physical panel key interactions (trip reset, brightness, navigation)

- Display mode transitions and theme changes

- Needle movement accuracy and latency

- Indicator blink frequency and timing characteristics

- Color compliance for critical visual elements

Results

The deployment automated over 400 test cases, achieving full regression coverage across all cluster functions. Execution time per regression cycle fell from 2-3 days to 3.5 hours.

Manual effort dropped from approximately 16 person-hours to about 30 minutes of supervision. More importantly, validation moved from subjective human observation to consistent, measurable widget classification at 98.7% accuracy.

Every test result was backed by objective evidence—camera frames, CAN logs, and structured inference outputs—creating a complete audit trail for traceability and compliance.

Key Defects Identified

- Fuel Warning Threshold Defect: Triggered at 9.5% instead of 10%

- Panel Key Timing Issue: Long press required 2.8s vs specified 2.5s

- Latency Issue: Speedometer response at 680 ms vs 400 ms spec

- Indicator Timing Defect: Blink rate at 72 BPM instead of 60 BPM
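
To put these findings on a common scale, the short calculation below expresses each measured value as a relative deviation from its specification. The figures are taken from the list above; the function name and the data layout are illustrative.

```python
# (finding, specified value, measured value), from the defect list above.
FINDINGS = [
    ("fuel warning threshold (%)", 10.0, 9.5),
    ("long-press duration (s)", 2.5, 2.8),
    ("speedometer latency (ms)", 400.0, 680.0),
    ("indicator blink rate (BPM)", 60.0, 72.0),
]


def relative_deviation_pct(spec: float, measured: float) -> float:
    """Signed deviation of the measured value from spec, in percent."""
    return (measured - spec) / spec * 100.0


for name, spec, measured in FINDINGS:
    deviation = relative_deviation_pct(spec, measured)
    print(f"{name}: {deviation:+.1f}% vs spec")
```

The deviations come out at roughly -5%, +12%, +70%, and +20% respectively, so the speedometer latency is the largest departure from specification in relative terms.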

Impact

The implementation transformed cluster validation from a manual, fragmented activity into a unified, automated engineering workflow.

Regression testing became scalable and reusable across cluster variants, reducing onboarding time for new variants from weeks to days. The availability of structured evidence improved confidence in results and supported compliance requirements.

Conclusion

By integrating CAN simulation, robotic actuation, and AI-driven visual validation, TestBot transformed instrument cluster testing into a fully automated, data-driven engineering workflow.

The NVIDIA Jetson-powered vision system emerged as a key differentiator, eliminating human subjectivity and enabling precise, widget-level validation with measurable accuracy.

This implementation now serves as a standard validation architecture for subsequent automotive cluster programs, significantly accelerating development cycles while ensuring uncompromised quality and compliance.